Endoscopic tumor ablation of upper tract urothelial carcinoma (UTUC) allows for tumor control with the benefit of renal preservation but is impacted by intraoperative visibility. We sought to develop a computer vision model for real-time, automated segmentation of UTUC tumors to augment visualization during treatment.

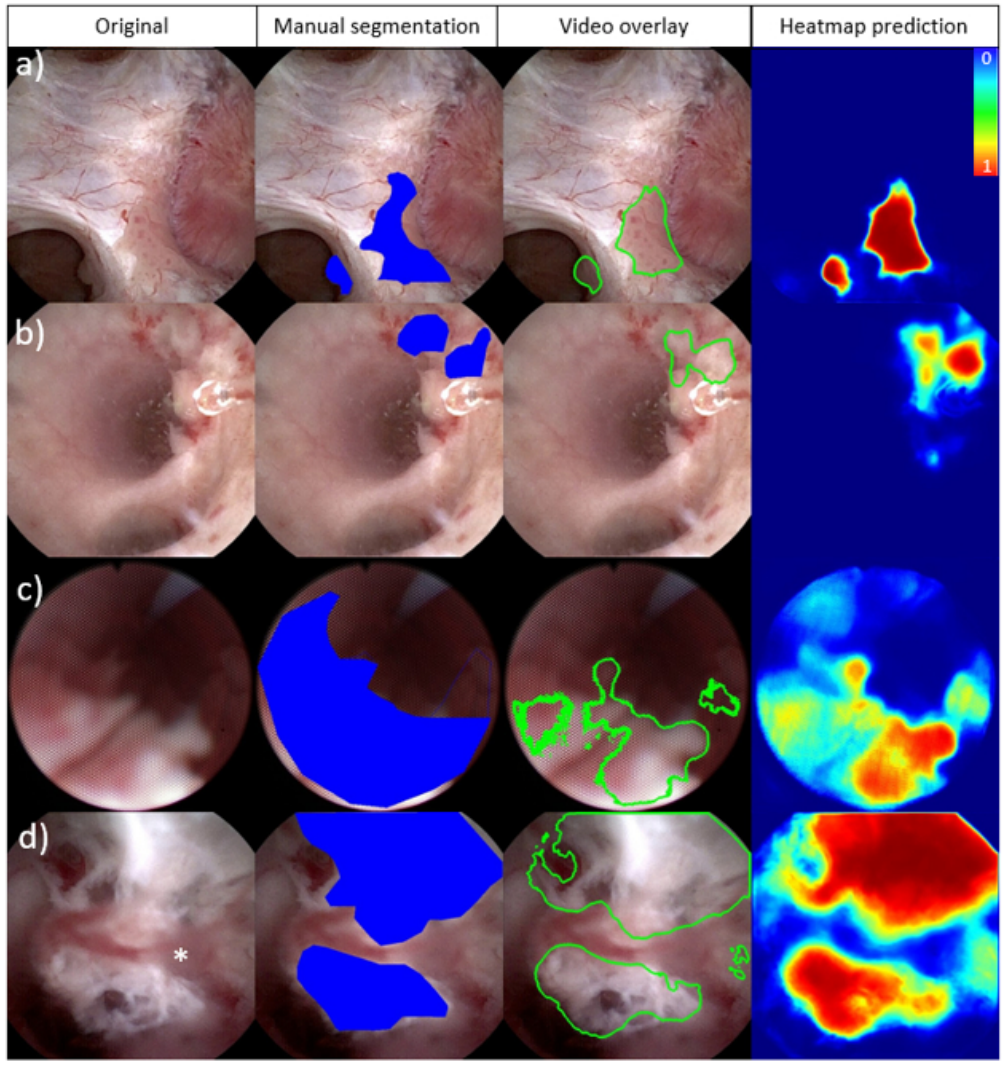

We collected twenty videos of endoscopic treatment of UTUC from two institutions. Frames from each video (N=3387) were extracted and manually annotated to identify tumors and areas of ablated tumor. Three established computer vision models (U-Net, U-Net++ and UNeXt) were trained using these annotated frames and compared. Eighty percent of the data was used to train the models, while 10% each was used for validation and testing. We evaluated the highest performing model for tumor and ablated tissue segmentation using a pixel-based analysis. The model and a video overlay depicting tumor segmentation were further evaluated intraoperatively.
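As an illustration of what a pixel-based analysis can look like, the sketch below computes AUC-ROC over the pixels of a single frame by treating every pixel as one binary classification (tumor vs. background). It is a minimal example under stated assumptions, not the evaluation code used in this study: the function name `pixel_auc_roc` and the input layout (a per-pixel probability map and a binary ground-truth mask of the same shape) are hypothetical.

```python
import numpy as np


def pixel_auc_roc(probs, mask):
    """AUC-ROC over pixels, via the rank-sum (Mann-Whitney U) identity.

    probs: per-pixel predicted probability of the target class (hypothetical input).
    mask:  binary ground-truth annotation with the same shape as probs.
    """
    scores = np.asarray(probs, dtype=float).ravel()
    labels = np.asarray(mask).ravel().astype(bool)
    n_pos = int(labels.sum())
    n_neg = labels.size - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("AUC-ROC needs both positive and negative pixels")

    # Assign 1-based ranks to the scores; tied scores share their average rank.
    _, inverse, counts = np.unique(scores, return_inverse=True, return_counts=True)
    last_rank = np.cumsum(counts)
    avg_rank = last_rank - (counts - 1) / 2.0
    ranks = avg_rank[inverse]

    # U statistic of the positive class, normalized to [0, 1].
    u = ranks[labels].sum() - n_pos * (n_pos + 1) / 2.0
    return u / (n_pos * n_neg)


# Toy 2x2 "frame": the bottom row is annotated as tumor.
toy_probs = np.array([[0.10, 0.40], [0.35, 0.80]])
toy_mask = np.array([[0, 0], [1, 1]])
toy_auc = pixel_auc_roc(toy_probs, toy_mask)
```

In this toy frame, three of the four tumor/background pixel pairs are ranked correctly, so `toy_auc` is 0.75. Whether pixels are pooled across all test frames or scored per frame and then averaged is a design choice the abstract does not specify.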

All twenty videos (mean duration 36 ± 58 s) demonstrated tumor identification, and 12 depicted areas of ablated tumor. The U-Net model demonstrated the best performance for segmentation of both tumors (AUC-ROC of 0.96) and areas of ablated tumor (AUC-ROC of 0.90). Additionally, we implemented a working system to process real-time video feeds and overlay model predictions intraoperatively. The model was able to annotate new videos at 15 frames per second (fps).
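One way such an overlay could be rendered is by alpha-blending a highlight color into the pixels the model flags, as sketched below. This is an assumption-laden illustration rather than the system described here: the function name `overlay_mask`, the 0.5 threshold, the green highlight and the 0.4 blend weight are all hypothetical choices. At 15 fps, segmentation and blending together have a budget of roughly 1/15 s, or about 67 ms, per frame.

```python
import numpy as np


def overlay_mask(frame, probs, threshold=0.5, color=(0, 255, 0), alpha=0.4):
    """Blend a highlight color into pixels whose predicted probability
    meets the threshold. All default values are hypothetical.

    frame: H x W x 3 uint8 image from the video feed.
    probs: H x W per-pixel probabilities from a segmentation model.
    """
    mask = np.asarray(probs) >= threshold
    out = frame.astype(np.float32)
    tint = np.array(color, dtype=np.float32)
    # Weighted average of the original pixel and the highlight color.
    out[mask] = (1.0 - alpha) * out[mask] + alpha * tint
    return np.clip(np.rint(out), 0, 255).astype(np.uint8)


# Toy example: a black 2x2 frame where two pixels exceed the threshold.
toy_frame = np.zeros((2, 2, 3), dtype=np.uint8)
toy_probs = np.array([[0.9, 0.1], [0.2, 0.7]])
toy_overlay = overlay_mask(toy_frame, toy_probs)
```

Keeping the blend partially transparent leaves the underlying tissue visible beneath the highlight, which matters when the overlay is meant to augment rather than replace the endoscopic view.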

Computer vision models demonstrate excellent real-time performance for automated upper tract urothelial tumor segmentation during ureteroscopy.

To be updated when published.